Difference-in-Difference

January 04, 2022, Evaluation Observatory

Consider a road repair program whose objective is to improve the population's access to labour markets. An outcome indicator for this would be the employment rate within the population. Simply comparing employment rates before and after the program is unlikely to reveal the impact attributable to it, because several other factors, known as confounders (such as education levels and access to networks), are also likely to influence the employment rate (Gertler et al., 2012, p. 130). And although RCTs, or experimental methods, are considered the gold standard for inferring causal attribution, an RCT is not possible here either, because the treatment was not administered through random assignment.

In this scenario, a difference-in-difference approach becomes useful.

The difference-in-difference design is a quasi-experimental design that compares the changes in outcomes over time between a population enrolled in a program (the treatment group) and a population that is not (the comparison group) (Gertler et al., 2012). The comparison group is usually constructed using 'matching methods', i.e. matching comparison units to treated units on a set of key characteristics, for instance gender and education levels, to adjust for confounding effects.

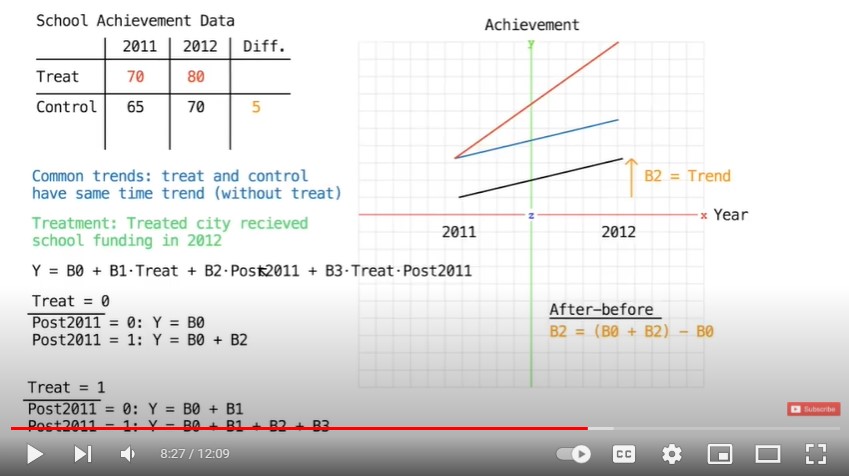

First, we calculate the difference in the before-and-after outcomes for the enrolled group, which controls for factors within the group that are constant over time. Next, we calculate the difference in the before-and-after outcomes for the non-enrolled group, which captures the factors that vary over time and affect both groups. Taking the difference of these two differences removes both sources of bias and gives an estimate of the program's impact, provided the equal trends assumption discussed below holds (Gertler et al., 2012).

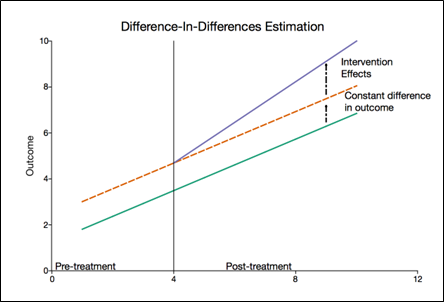
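The two-step calculation described above can be sketched in a few lines of Python. This is a minimal illustration only: the employment rates and the function name `diff_in_diff` are hypothetical and are not taken from any study or from the sources cited here.

```python
# Hypothetical employment rates (illustrative numbers only, not real data).
rates = {
    ("treatment", "before"): 0.50,
    ("treatment", "after"): 0.62,
    ("comparison", "before"): 0.55,
    ("comparison", "after"): 0.60,
}


def diff_in_diff(rates):
    """Return (treatment change, comparison change, diff-in-diff estimate)."""
    # First difference: before-and-after change in the enrolled group.
    treatment_change = rates[("treatment", "after")] - rates[("treatment", "before")]
    # Second difference: before-and-after change in the non-enrolled group.
    comparison_change = rates[("comparison", "after")] - rates[("comparison", "before")]
    # Difference of the two differences.
    return treatment_change, comparison_change, treatment_change - comparison_change


treatment_change, comparison_change, did = diff_in_diff(rates)
print(f"Change in treatment group:  {treatment_change:.2f}")
print(f"Change in comparison group: {comparison_change:.2f}")
print(f"Difference-in-difference:   {did:.2f}")
```

With these made-up numbers, a naive before-and-after comparison would credit the program with the full change in the treatment group, whereas the diff-in-diff estimate nets out the change that the comparison group experienced over the same period.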

The diff-in-diff approach compares trends between the treatment and comparison groups instead of comparing outcome levels (Gertler et al., 2012). This can be understood with the help of the following diagram.

Source: (Eric, 2019)

An essential assumption of this approach is that, in the absence of the program, the difference between the treatment and comparison groups would have remained constant over time. This is known as the equal trends assumption, and it is crucial to test its validity before relying on a difference-in-difference estimate (Gertler et al., 2012). For a comprehensive understanding of the topic, please refer to the recommended resources given below.
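One informal way to probe the equal trends assumption is to check whether the gap between the two groups was stable across several periods before the program began. The sketch below assumes that such pre-program observations are available; the data and the helper names `pre_period_gaps` and `max_gap_drift` are hypothetical. It is a descriptive check rather than a formal statistical test, and a stable pre-program gap does not prove that the trends would have stayed equal afterwards.

```python
# Hypothetical pre-program employment rates by year (illustrative only).
pre_treatment = [0.44, 0.47, 0.50]
pre_comparison = [0.49, 0.52, 0.55]


def pre_period_gaps(treated, comparison):
    """Gap between the two groups in each pre-program period."""
    return [t - c for t, c in zip(treated, comparison)]


def max_gap_drift(gaps):
    """Largest change in the gap between consecutive periods."""
    return max(abs(later - earlier) for earlier, later in zip(gaps, gaps[1:]))


gaps = pre_period_gaps(pre_treatment, pre_comparison)
drift = max_gap_drift(gaps)
print("Pre-period gaps:", [round(g, 3) for g in gaps])
print("Largest period-to-period change in gap:", round(drift, 3))
```

A drift close to zero means the two groups moved in step before the program. A gap that widens or narrows over the pre-program periods would cast doubt on the comparison group.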

List of recommended resources:

For a broad overview

- Published by the World Bank, this resource gives an overview of the method and its implementation. It also highlights the key assumptions of this method.

- Presented by Prashant Bharadwaj of the University of California, this resource explains the diff-in-diff method using the effect of a change in marriage laws in Mississippi, USA, on education, fertility and marriage as a case study.

- Published by Aptech, this resource explains the concept of Difference-in-Differences estimation and illustrates the use of this method with the help of case examples.

- Written by Anders Fredriksson and Gustavo Oliveira, this paper presents the Difference-in-Differences method in accessible language for a broad range of management scholars interested in applying impact evaluation methods. The paper describes the method, assumptions, and regression specifications using numerous examples.

- This document by Princeton University is a step-by-step guide on using Stata for Difference-in-Difference. It guides practitioners on using various commands in Stata for estimating impact. A similar document covering the commands in R is also available: Differences-in-Differences (using R).

- Developed by the Partnership for Economic Policy (PEP), this video series gives a conceptual overview of the diff-in-diff method and explains the various underlying assumptions required for the technique's validity. It also illustrates the estimation of the diff-in-diff method using an example.

- Developed by EU Science Hub, this video explains why diff-in-diff is a powerful 'Counterfactual Impact Evaluation' method for identifying the most effective projects that give good value for money.

- Published by Empiricist Academy, this animation illustrates how to obtain the differences-in-differences estimate using a regression model.

- Presented by Andrew Heiss, this video series provides an introduction to the method and its various assumptions. It also includes a series of five lectures on using R for the difference-in-difference method.

For in-depth understanding

- Published by the World Bank Group, Chapter 7 of the book has a detailed discussion of the Difference-in-Difference method. It gives the rationale for using the method, its utility, process and assumptions. It also highlights the method's limitations and provides practitioners with a checklist for effective impact measurement.

Case Study

- Published by Care Evaluations, this case study evaluates the differences in outcomes and processes of the gender-transformative EKATA approach compared against a standard “Gender Light” approach in the outcome areas of gender equality, and food security and economic well-being.

- Written by Ken Njogu Mwangi, this paper uses the difference-in-difference method to evaluate whether the Fruiting Africa Project (FAP), an agroforestry project implemented by the World Agroforestry Centre (ICRAF), made a difference in the livelihoods of beneficiaries.

- Published by the National Center for Biotechnology Information, this paper uses the difference-in-difference estimation approach to explore the self-selection bias in estimating the effect of neighbourhood economic environment on self-assessed health among older adults.

Further Reading

- Published by the National Bureau of Economic Research and written by Marianne Bertrand, Esther Duflo and Sendhil Mullainathan, this paper explains the bias in estimated standard errors introduced by serial correlation and discusses two simple techniques to solve the issue.

- Published by the National Bureau of Economic Research and written by Timothy Conley and Christopher Taber, this paper provides a new method for inference in Difference-in-Difference models when there are a small number of policy changes. The paper demonstrates the diff-in-diff approach by analysing the effect of college merit aid programs on college attendance and shows that the standard approach can give misleading results in some cases.

References:

Gertler, P., Martinez, S., Premand, P., Rawlings, L., & Vermeersch, M. (2012). Impact Evaluation in Practice. World Bank Group.

Eric. (2019, March 30). Introduction to Difference-in-Differences Estimation. Aptech. https://www.aptech.com/blog/introduction-to-difference-in-differences-estimation/
